Google is scrapping FAQ rich results: what it means for your website

May 15, 2026 | Posted by Sean Walsh | Round-Up

Google confirmed last week that it will no longer support FAQ rich results in search. The expandable question-and-answer panels that appeared directly beneath certain search listings are being removed, along with the Search Console features that allowed webmasters to monitor their performance. For any business that invested time in implementing FAQ schema, this is worth understanding clearly: what is changing, what it means in practice, and whether any of that investment needs to be redirected.

The short answer is that this change is less damaging than it might initially sound. But there are some useful lessons in it about how to think about structured data more broadly as Google continues to adjust what it surfaces and how.

What FAQ rich results actually were

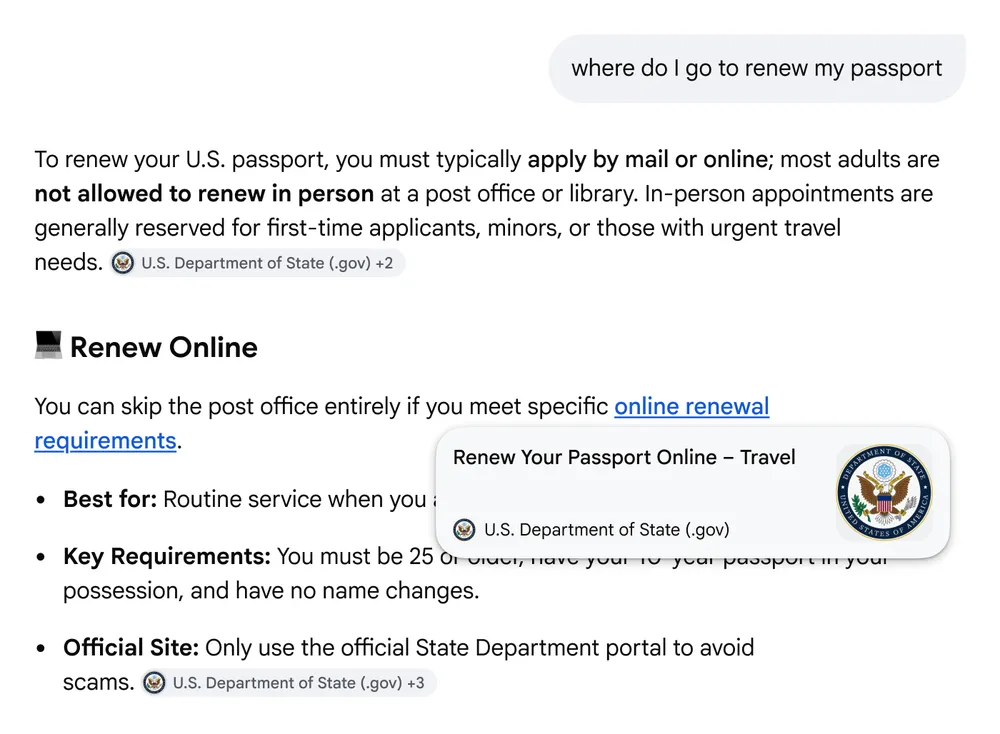

FAQ rich results were the expandable accordions that appeared below a search listing, showing individual question-and-answer pairs pulled directly from a page’s structured data. When implemented correctly using FAQ schema markup, they could significantly expand a search listing’s visual footprint on the page, making it more prominent without requiring a higher ranking.
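For readers who have not seen the markup itself, here is a minimal sketch of what FAQ schema looks like, generated with Python. The `FAQPage`, `Question` and `Answer` types and the `mainEntity` and `acceptedAnswer` properties are schema.org vocabulary; the function names and the example questions are hypothetical and only there for illustration.

```python
import json


def build_faq_jsonld(pairs):
    """Return a schema.org FAQPage object for (question, answer) pairs."""
    return {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }


def to_script_tag(data):
    """Wrap the JSON-LD in the script element that pages embed it in."""
    # Escape "</" so answer text cannot close the script element early.
    body = json.dumps(data, indent=2, ensure_ascii=False).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{body}\n</script>'


# Hypothetical example content, not taken from any real site.
EXAMPLE_PAIRS = [
    ("Do you offer weekend appointments?", "Yes, on Saturdays from 9am to 1pm."),
    ("Is parking available?", "Yes, there is free parking behind the building."),
]

if __name__ == "__main__":
    print(to_script_tag(build_faq_jsonld(EXAMPLE_PAIRS)))
```

The printed script element is what would sit in the page's HTML. Each question-and-answer pair in it corresponds to one row of the accordion that used to appear under the listing.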

Google started restricting FAQ rich results in 2023, limiting them to well-known, authoritative government and health websites. The full removal confirmed last week completes that process. The associated Search Console report, which showed impressions and clicks from FAQ rich results, will be deprecated alongside the feature itself.

How much does this actually matter?

For most businesses, the honest answer is: less than it might appear. By 2023, Google had already restricted FAQ rich results to a narrow category of sites, which means the vast majority of businesses had not been benefiting from them in search results for some time. The visual expansion of a listing was a genuine competitive advantage when the feature was widely available, but that window closed in 2023.

What is changing now is the formal retirement of a feature that was already largely inactive for commercial websites. The Search Console report being removed is a minor practical inconvenience if you were still tracking FAQ impressions, but the disappearance of the report does not reflect a loss of current traffic or visibility for most sites.

Should you remove your FAQ schema?

Not necessarily, and in many cases the answer is no. This is an important distinction. FAQ schema no longer produces rich result panels in standard Google Search, but structured data serves multiple purposes beyond generating visual enhancements in the search results page.

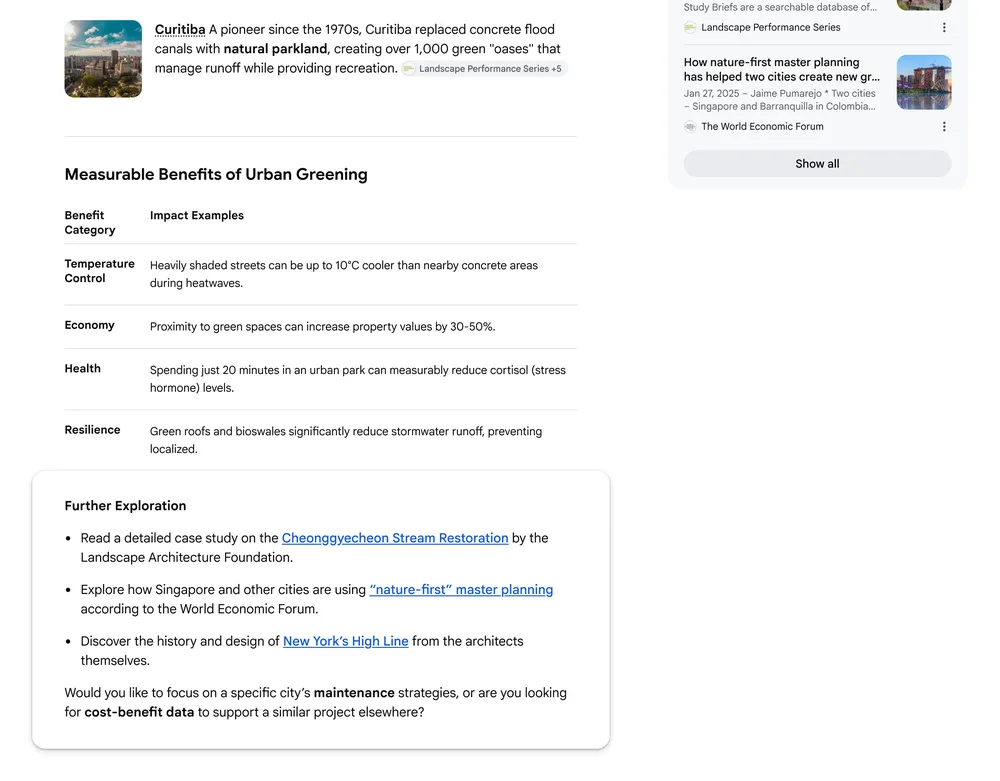

As we covered recently when looking at the tools worth using for answer engine optimisation (AEO), structured data is one of the cleaner signals available for AI retrieval. FAQ schema specifically provides AI systems with a machine-readable layer of question-and-answer content that can inform how those systems cite and use your content in AI-generated answers, entirely separate from whether Google renders it as a rich result in traditional search.

The argument for keeping well-implemented FAQ schema in place is straightforward. It costs nothing to maintain, it provides clarity to search engines and AI systems about the purpose and structure of your content, and removing it does not guarantee any benefit. Unless your FAQ schema is technically broken or generating errors in Search Console, the case for removing it is weak.
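One way to judge whether existing markup is "technically broken" without relying on the Search Console report is a quick local sanity check. The sketch below is an assumption about what a reasonable check covers (the right types, non-empty questions, non-empty answers). It is not Google's validation logic, and `find_faq_problems` is a hypothetical helper name.

```python
def find_faq_problems(data):
    """Return a list of human-readable problems found in an FAQPage object."""
    problems = []
    if data.get("@type") != "FAQPage":
        problems.append("top-level @type is not FAQPage")

    questions = data.get("mainEntity")
    if isinstance(questions, dict):
        # A single question may be given as an object rather than a list.
        questions = [questions]
    if not isinstance(questions, list) or not questions:
        problems.append("mainEntity is missing or empty")
        return problems

    for i, q in enumerate(questions, start=1):
        if not isinstance(q, dict) or q.get("@type") != "Question":
            problems.append(f"item {i}: @type is not Question")
            continue
        if not str(q.get("name") or "").strip():
            problems.append(f"item {i}: question text (name) is empty")
        answer = q.get("acceptedAnswer")
        if not isinstance(answer, dict) or not str(answer.get("text") or "").strip():
            problems.append(f"item {i}: acceptedAnswer.text is missing or empty")
    return problems
```

An empty list suggests the markup is structurally sound and can stay where it is. Anything else is worth fixing regardless of whether Google still renders the panel.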

What to redirect your structured data efforts towards

If this news prompts a review of how structured data is implemented on your site, that is a worthwhile exercise. Prioritise the schema types that still produce rich results in Google Search and are increasingly relevant for AI retrieval. The most consistently valuable include:

- Review schema. Star ratings in search results remain one of the highest-impact visual enhancements available and are directly relevant to businesses in sectors such as healthcare, dental, aesthetics and professional services where social proof influences decisions.

- Local business schema. For businesses with physical locations, accurate and complete local business schema reinforces the information shown in Google Business Profiles and supports consistent citation across AI search systems. This is particularly important for multi-location businesses and sectors where local search intent is high.

- Product schema. For e-commerce businesses, product schema supporting price, availability and review information continues to produce rich results in both standard search and Shopping. This remains one of the most directly commercial schema types available.

- Article and breadcrumb schema. These support how content pages are understood and indexed, contributing to cleaner crawling and more accurate representation in search results, and remain relevant for content-heavy sites.

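- Event schema. Event markup continues to produce rich results for relevant content and is worth implementing where the content justifies it. How-to markup is often grouped with it, but Google retired how-to rich results in 2023, so it no longer produces a visual enhancement in Search.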
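To make one of the types above concrete, here is a rough sketch of product markup carrying the price, availability and review information mentioned in the list. The property names (`offers`, `priceCurrency`, `availability`, `aggregateRating`, `ratingValue`, `reviewCount`) are schema.org vocabulary; the function name and the sample values are hypothetical.

```python
import json


def build_product_jsonld(name, price, currency, in_stock, rating_value, review_count):
    """Return a schema.org Product object with one offer and an aggregate rating."""
    availability = (
        "https://schema.org/InStock" if in_stock else "https://schema.org/OutOfStock"
    )
    return {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",  # fixed two decimals, e.g. "49.00"
            "priceCurrency": currency,  # ISO 4217 code such as "GBP"
            "availability": availability,
        },
        "aggregateRating": {
            "@type": "AggregateRating",
            "ratingValue": rating_value,
            "reviewCount": review_count,
        },
    }


if __name__ == "__main__":
    # Hypothetical product used purely as sample data.
    example = build_product_jsonld("Example Desk Lamp", 49, "GBP", True, 4.6, 128)
    print(json.dumps(example, indent=2))
```

The same pattern applies to the other types: pick the schema.org type, fill in the properties the page can genuinely support, and keep the values in sync with what visitors see on the page.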

The broader pattern worth noting

The removal of FAQ rich results is part of a longer pattern. Google has been progressively reducing the variety of rich result types it surfaces in standard search as AI Overviews and AI Mode take up more of the results page. The shift towards AI-generated answers changes what it means to be visible in search. The visual real estate previously occupied by FAQ panels, knowledge panels and similar features is increasingly being consumed by AI-generated content instead.

This does not mean structured data is becoming less important. If anything, the opposite is true. As AI systems take a more active role in assembling answers from multiple sources, the clarity and accuracy of the signals you provide about your content become more valuable, not less. The mechanism by which those signals produce a visible result in search is changing. The underlying importance of giving search engines and AI systems well-structured, accurate, machine-readable information is not.

What to do now

A few practical steps are worth taking in light of this change.

- Check your Search Console for any FAQ rich result errors or warnings. These will stop being reported when the feature is deprecated, but addressing any existing errors is good practice before the report disappears.

- Audit your current structured data implementation more broadly. If FAQ schema was the only schema type in use on your site, now is a good time to review whether review, local business or product schema should be added where applicable.

- Do not remove FAQ schema solely because of this change. If your FAQ schema is well-implemented and error-free, leave it in place. Its value in an AI retrieval context is not affected by Google’s decision to stop rendering it as a rich result.

- Focus new structured data investment on the types that continue to produce visible results: review, local business, product and event schema, depending on what is relevant to your business.
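The audit step can be roughed out in a few lines of Python using only the standard library. This sketch assumes you already have a page's HTML as a string, and it only reads JSON-LD (not microdata or RDFa). The `PRIORITY_TYPES` set is an illustrative assumption drawn from the types discussed in this article, and it matches on exact type names, so a more specific subtype of `LocalBusiness` would need adding by hand.

```python
import json
from html.parser import HTMLParser

# Illustrative shortlist based on this article; adjust to suit the business.
PRIORITY_TYPES = {"Review", "AggregateRating", "LocalBusiness", "Product", "Event"}


class JsonLdCollector(HTMLParser):
    """Collect the text of every <script type="application/ld+json"> block."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._in_jsonld = False

    def handle_starttag(self, tag, attrs):
        script_type = (dict(attrs).get("type") or "").lower()
        if tag == "script" and script_type == "application/ld+json":
            self._in_jsonld = True
            self.blocks.append("")

    def handle_endtag(self, tag):
        if tag == "script":
            self._in_jsonld = False

    def handle_data(self, data):
        if self._in_jsonld:
            self.blocks[-1] += data


def collect_types(node, found):
    """Walk parsed JSON-LD and record every @type value, including nested ones."""
    if isinstance(node, dict):
        types = node.get("@type")
        if isinstance(types, str):
            found.add(types)
        elif isinstance(types, list):
            found.update(t for t in types if isinstance(t, str))
        for value in node.values():
            collect_types(value, found)
    elif isinstance(node, list):
        for item in node:
            collect_types(item, found)


def audit_html(html):
    """Return (types found, priority types absent) for one page's HTML."""
    parser = JsonLdCollector()
    parser.feed(html)
    parser.close()
    found = set()
    for block in parser.blocks:
        try:
            collect_types(json.loads(block), found)
        except json.JSONDecodeError:
            # Flag unparseable markup rather than silently skipping it.
            found.add("<invalid JSON-LD block>")
    return found, PRIORITY_TYPES - found
```

Calling `audit_html(page_html)` on a page that carries only FAQ markup would return `FAQPage`, `Question` and `Answer` as found, with every priority type listed as absent. That is the signal that the page relied on FAQ schema alone.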

The disappearance of FAQ rich results is worth knowing about, but it is not a reason to panic or make sweeping changes. It is a reminder that search is a changing environment and that the value of any single technical feature is always temporary. The underlying principle, making your content clear, accurate and well-structured, remains constant regardless of which specific features Google chooses to support at any given time.